Short-Form Video Retention Engine - Turn Viral Shorts into Teaching Templates

Drop in any TikTok, Reel, or YouTube Short whose pacing hooks you. Pick a topic you want to teach. Reelize reverse-engineers the reference's retention structure - hook timing, beat grid, scene rhythm, voice cadence - and delivers a finished short on that blueprint. Not a script. A video.

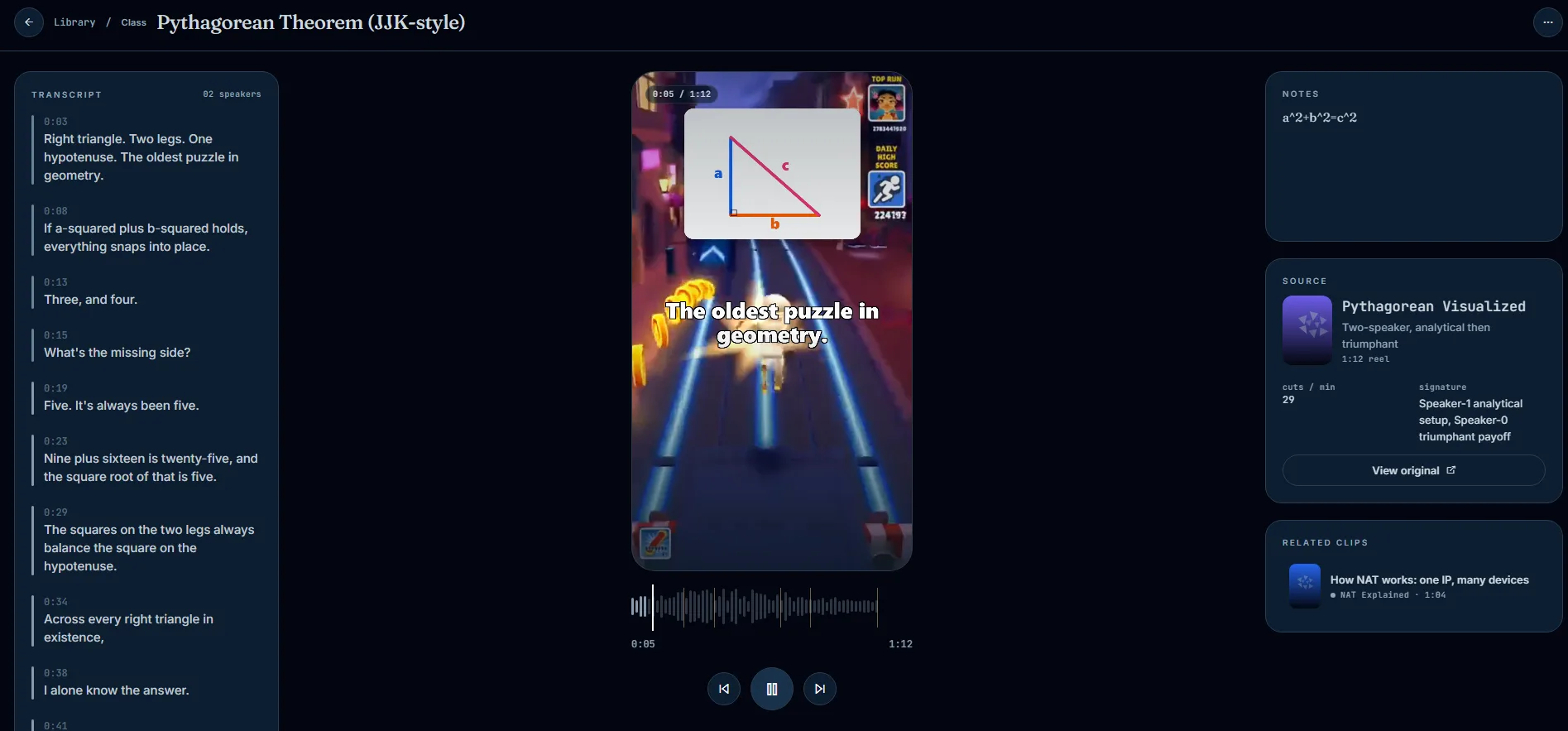

Project Overview

The Problem

Short-form video is the most effective retention format ever invented. Creators on TikTok and YouTube Shorts have engineered pacing, hooks, and beat structures that hold viewers for 60 straight seconds.

Meanwhile, educators - and anyone trying to explain a complex idea online - are still losing people in the first three seconds. The retention blueprints exist. They just aren't reusable.

Our Solution

Reelize lets you drop in any TikTok, Reel, or YouTube Short whose pacing hooks you and pick a topic you want to teach. It then reverse-engineers the reference's retention structure - hook timing, beat grid, scene rhythm, voice cadence.

The output is a fully rendered short on that blueprint, delivered to your phone, ready to publish. Not a script. Not a slideshow. A finished video.

The Pipeline

ANALYZE

Demucs for audio stem separation, Whisper for transcription, Shazam for music ID, librosa for beat grid and energy envelope, pyannote for speaker diarization, and Gemini for scene detection with a keyframe refinement pass.

EXTRACT

Hook timing, pacing curve, visual rhythm, and voice cadence assembled into a single shared manifest that every downstream stage reads from.
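As a rough illustration of what such a shared manifest could look like, here is a minimal sketch. The class and every field name (`Manifest`, `hook_end_ms`, `stage_status`, and so on) are assumptions made for this example, not the project's actual schema.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import Dict, List, Optional


@dataclass
class Scene:
    start_ms: int
    end_ms: int


@dataclass
class Manifest:
    """Hypothetical shared manifest; all field names are illustrative."""
    source_id: str
    duration_ms: int
    hook_end_ms: Optional[int] = None                  # where the opening hook ends
    beat_times_ms: List[int] = field(default_factory=list)
    energy_envelope: List[float] = field(default_factory=list)
    scenes: List[Scene] = field(default_factory=list)
    transcript: List[dict] = field(default_factory=list)
    stage_status: Dict[str, str] = field(default_factory=dict)

    def to_json(self) -> str:
        # asdict() recurses into nested dataclasses such as Scene
        return json.dumps(asdict(self), indent=2)

    def missing_stages(self, required: List[str]) -> List[str]:
        # Stages a downstream consumer still has to wait for
        return [s for s in required if self.stage_status.get(s) != "done"]
```

Keeping every timestamp in one unit (milliseconds here) and tracking per-stage status in the same object is one way to let downstream stages read a single source of truth.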

GENERATE

Gemini writes a script aligned to the reference's beat grid (beat grid and energy envelope fed directly into the prompt). ElevenLabs synthesizes the voiceover. Music and SFX matched to the original's energy curve.

RENDER

Remotion (React-based video framework) composes the final video with layered voice, music, SFX, and footage timed to the original's beat grid at millisecond precision.

Tech Stack

Mobile + web + backend shipped in 48 hours

Client

Expo / React Native

Cross-platform app handling upload, preview, and download. Uploads use signed-URL flows to Cloudflare R2 to avoid platform-specific multipart upload quirks across iOS, Android, and web.

Backend

FastAPI Orchestration

FastAPI orchestrates a serialized job queue so GPU-heavy analysis and render stages don't contend for memory. One job flows end-to-end before the next begins.

AI Models

Seven Specialized Services

Each model specializes in one signal: stems, transcript, music ID, beat grid, diarization, scene detection, and voice synthesis. A shared manifest unifies their output.

Renderer

Remotion (React-based)

Remotion composes the final video deterministically - voice, music, SFX, and footage aligned to the reference's beat grid with millisecond precision.

Infrastructure

Supabase + Cloudflare R2

Supabase Postgres tracks job state across the pipeline. Supabase Storage plus Cloudflare R2 hold media assets - source videos, stems, renders - behind signed URLs.

Hard Problems

What actually took the 48 hours

Systems & Orchestration

Seven specialized services produced data in different shapes and timing spaces. Half the 48 hours went into designing a single shared manifest with retries and partial-failure recovery. The AI models were commodities; the pipeline was the product.
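One possible shape for retries with partial-failure recovery is sketched below. It assumes each stage is a plain callable and that outcomes are recorded in a dict keyed by stage name; `run_stage`, `results`, and the backoff parameters are hypothetical names chosen for this example.

```python
import time


def run_stage(name, fn, results, retries=3, base_delay=0.0):
    """Run one stage and record its outcome in `results`.

    A stage already marked "done" is skipped, so a re-run of the
    whole job resumes from the first stage that failed.
    """
    if results.get(name, {}).get("status") == "done":
        return results[name]["output"]

    last_error = None
    for attempt in range(1, retries + 1):
        try:
            output = fn()
            results[name] = {"status": "done", "output": output, "attempts": attempt}
            return output
        except Exception as exc:
            last_error = exc
            if attempt < retries:
                # Exponential backoff between attempts
                time.sleep(base_delay * 2 ** (attempt - 1))

    # Record the failure instead of raising, so other stages can still run
    results[name] = {"status": "failed", "error": str(last_error), "attempts": retries}
    return None
```

Recording a failure rather than raising lets independent stages finish, and a later re-run only repeats the stages that are not yet marked done.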

Running Demucs, Whisper, pyannote, and Gemini concurrently on one machine melted memory. Solved with a serialized job queue that processes one job end-to-end.
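A serialized queue of this kind can be approximated with a single worker draining an `asyncio.Queue`. The sketch below is an assumption about the general pattern, not the project's code; `run_pipeline` is a placeholder standing in for the real stages.

```python
import asyncio


async def run_pipeline(job_id, log):
    """Placeholder for the real analyze -> extract -> generate -> render work."""
    for stage in ("analyze", "extract", "generate", "render"):
        log.append((job_id, stage))
        await asyncio.sleep(0)  # yield control, as real I/O would


async def worker(queue, log):
    # A single worker means at most one job holds memory at a time
    while True:
        job_id = await queue.get()
        try:
            await run_pipeline(job_id, log)
        finally:
            queue.task_done()


async def process_serially(job_ids):
    queue = asyncio.Queue()
    log = []
    for job_id in job_ids:
        queue.put_nowait(job_id)
    task = asyncio.create_task(worker(queue, log))
    await queue.join()   # wait until every queued job has finished
    task.cancel()        # then stop the idle worker
    try:
        await task
    except asyncio.CancelledError:
        pass
    return log
```

Calling `asyncio.run(process_serially(["a", "b"]))` runs all four stages of job `a` before any stage of job `b` starts, trading throughput for a predictable memory ceiling.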

Getting a generated script to land emphasis on the reference's existing beat drops required feeding the beat grid and energy envelope directly into the LLM prompt, not just the transcript.
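To make the idea concrete, here is a minimal sketch of a prompt builder that passes timing data alongside the transcript. The function name, the prompt wording, and the assumption that `energy` holds one value per beat are all illustrative; the actual prompt used with Gemini is not shown in this write-up.

```python
def build_script_prompt(topic, beat_times_s, energy, transcript, top_k=3):
    """Assemble a prompt that carries beat timing, not just the transcript.

    Assumes `energy[i]` is the energy-envelope value sampled at `beat_times_s[i]`.
    """
    # Rank beats by energy and keep the strongest ones as emphasis targets
    ranked = sorted(zip(beat_times_s, energy), key=lambda pair: pair[1], reverse=True)
    drops = sorted(t for t, _ in ranked[:top_k])

    beat_line = ", ".join(f"{t:.2f}" for t in beat_times_s)
    drop_line = ", ".join(f"{t:.2f}" for t in drops)
    return (
        f"Write a voiceover script teaching: {topic}\n"
        f"Beat times (s): {beat_line}\n"
        f"Highest-energy beats (s): {drop_line}\n"
        "Land the key word of a sentence on each high-energy beat.\n"
        f"Reference transcript, for pacing only:\n{transcript}\n"
    )
```

Listing the high-energy beats explicitly gives the model concrete timestamps to aim for, rather than asking it to infer rhythm from text alone.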

Signal & Rendering

Single-pass Gemini detection missed half-second cuts on fast-edited Shorts. Added a keyframe refinement pass that re-checks boundaries with pixel-level diffs.
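A refinement pass along these lines can be sketched in plain Python. The version below assumes frames arrive as flat lists of grayscale pixel values and that a coarse boundary index is already known; the window size, threshold, and function names are hypothetical.

```python
def frame_diff(a, b):
    """Mean absolute per-pixel difference between two equal-sized grayscale frames."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def refine_boundary(frames, coarse_idx, window=5, threshold=20.0):
    """Snap a coarse scene boundary to the sharpest cut within `window` frames.

    Returns the index of the first frame after the cut, or `coarse_idx`
    unchanged when no difference in the window reaches `threshold`.
    """
    lo = max(1, coarse_idx - window)
    hi = min(len(frames) - 1, coarse_idx + window)
    best_idx, best_diff = coarse_idx, 0.0
    for i in range(lo, hi + 1):
        d = frame_diff(frames[i - 1], frames[i])
        if d > best_diff:
            best_idx, best_diff = i, d
    return best_idx if best_diff >= threshold else coarse_idx
```

For example, with six dark frames followed by six bright ones and a coarse guess of frame 4, the function moves the boundary to frame 6, the first bright frame.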

Aligning voice, music, and footage to the millisecond inside Remotion required normalizing every timing source to a single clock before composition.
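The normalization step might look like the sketch below, which assumes integer milliseconds as the shared clock and converts to frame numbers only at composition time. The helper names and the choice of milliseconds are assumptions for illustration.

```python
def seconds_to_ms(seconds):
    """Transcript and beat timestamps usually arrive as float seconds."""
    return round(seconds * 1000)


def samples_to_ms(sample_index, sample_rate):
    """Audio positions expressed as sample offsets."""
    return round(sample_index * 1000 / sample_rate)


def frames_to_ms(frame_index, fps):
    """Scene boundaries expressed as video frame numbers."""
    return round(frame_index * 1000 / fps)


def ms_to_frame(ms, fps):
    """Convert back to a frame number for a frame-based renderer."""
    return round(ms * fps / 1000)
```

For instance, a cut at frame 45 of a 30 fps reference and a beat at sample 66150 of 44.1 kHz audio both map to 1500 ms, so they can be compared directly before being converted back to frames at the output frame rate.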

Expo file handling differs across iOS, Android, and web. Replaced direct multipart API uploads with signed-URL flows to R2.

Built With

By the Numbers

Grand Prize at Hack Brooklyn 2026

From Idea to Shipped

AI Services Orchestrated

Builders